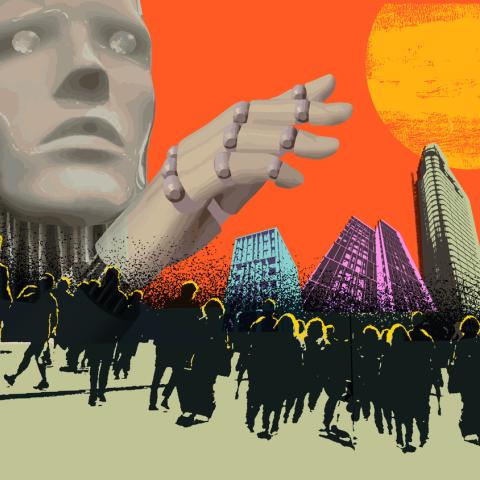

People have morals; machines have objectives – the AI hazard

For the last two hundred years or so, from Frankenstein to Ex Machina and Blade Runner, popular culture has warned us of the moral hazards of creating new or artificial intelligences. In the last year, generative AI has moved with spectacular speed from the realms of science fiction to the ‘street’ with the latest iterations of ChatGPT and Microsoft’s Bing AI. In fact, the development of AI has moved with such speed that many big tech gurus are now advocating for a pause or, at the very least, some serious restraint. Only this week Sam Altman, chief executive of OpenAI, the company behind ChatGPT, admitted in a Senate committee hearing that tech companies are in danger of unleashing a rogue artificial intelligence that will cause “significant harm to the world” without urgent intervention by governments.

Senator Richard Blumenthal began that very same Senate committee hearing with an AI-generated speech read by a bot that had copied his voice. It said: “Too often, we have seen what happens when technology outpaces regulation.” Whilst the invention of things like the steam engine may have propelled humanity towards the next stage of its development, the question of whether it was good for the planet is much more open-ended.

The modern global economy is, in many ways, on a relentless quest for (often short-term) ‘value creation’, using ever more powerful technologies to exploit our environment. We have found ourselves in a situation akin to the boiling frog syndrome, where we (humanity) are pushing the planet towards systemic collapse.

Whether you manufacture footwear, grow tomatoes, work in HR or financial services, machine learning tools that process vast amounts of data in the blink of an eye have been hailed as a key ingredient in the success of any business. Indeed, AI tools are being used to solve ESG issues themselves. But is AI really compatible with a truly responsible business or the concept of ESG, or is it actually one of the biggest emerging ESG and reputational risks? Herein lies the rub: people have morals; machines have objectives. Currently, that detachment is at the crux of the problem with AI.

Looking at AI through the lens of ESG risks:

E – From an environmental perspective, AI is already problematic – the carbon footprint and energy consumption from storing and processing data are vast and growing rapidly. Furthermore, the supply chains that feed AI hardware are often opaque and can have harmful and far-reaching environmental impacts, from mining rare earths through to eventual disposal. And will AIs built to optimise business processes really be ‘thinking’ about environmental consequences in the way that an organic life form would? Many AIs are trained to align with human values (which could be interpreted as protecting human life), but since humanity is also dependent on an extremely complex natural ecosystem that we do not yet fully understand, how can we expect an AI to understand it?

S – Decisions made by AI are already starting to permeate every aspect of our lives. There is a growing body of examples where AI-led decisions have had disastrous consequences for humans. AIs have been shown to discriminate against certain groups as a result of unconscious bias from the original programmers, or even just unaccountable decision-making or reasoning by the AI.

G – How transparent is AI decision-making, and who is (legally) accountable for it? Does the senior leadership team (SLT) of a business really know what its AIs do and why? From a governance perspective, most management teams and employees lack the technology skills to ensure that AIs are used properly and responsibly. What’s more, most companies will outsource or white-label AI products, creating an additional layer of opacity. How can you be sure of the ethics and technical ability of your AI provider, especially if you are a small business that doesn’t have the expertise?

From a reputational perspective, perhaps the most alarming risk of all is the law of unforeseen consequences – the yawning gap between our ability to innovate and our ability to foresee the consequences. Unaccountable decisions are proposed by AIs every second of every day, and this will only increase with time as they become more powerful. They potentially have the power to amplify mistakes to a truly global level with incredible speed, and to influence billions of people’s behaviour. This is why we need to listen to people like Sam Altman. Time is something that is in short supply when it comes to people’s lives and the climate crisis. The answer has to be more haste and less speed.